Data Vault – Perfect Consistency without Referential Integrity

Each data base management system has a validation feature for referential integrity to ensure data consistency. When implementing a Data Vault 2.0 system, often this feature gets disabled to speed up the data load. An easy possibility to automatically ensure consistency in your data vault is shown in this article.

The Hashkey-Concept of Data Vault 2.0 allows loading entities (hub/statellite combinations or links) completely decoupled from each other. Also when there are direct relationships between them. This brings benefits when integrating data from multiple source systems and when data should be available for end users much quicker.

Using that concept is only possible when the referential integrity (RI) between tables in your data base management system is deactivated. Otherwise it wouldn't be possible to load a Link-Table before loading the Hub-Tables that the Link-Table references.

The referential integrity features of an RDBMS is really important because records that point to records that do not exist are not thinkable in a trustful data source for analytics and decision making.

What you need is an own housekeeping-process that validates and ensures referential integrity. But instead of writing your own solution, there is a much easier and efficient way solving this problem using the Data Quality Automation suite BiG EVAL. In a standardized test case, BiG EVAL tries to resolve references by looking up each and every Hashkey, it is able to find that ones that are not valid. Using a simple BiG EVAL script, the test case gets automatically applied on all Links (Link to Hub references) and Satellites (Satellite to Hub references). Activated once, it automatically covers all relationships of the data vault. Also when new relationships get added in the future.

Implementing the consistency check with BiG EVAL Data Vault

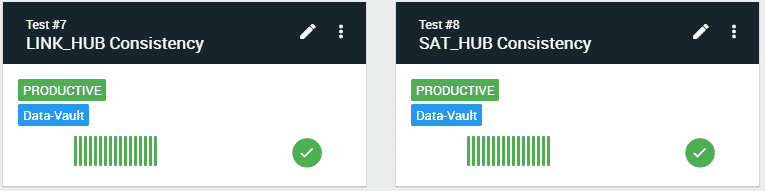

You need two new testcases. One for checking Link to Hub relationships, and the other one for checking Satellite to Hub relationships. This article only shows you the Link to Hub relationship test case. But you can build up the other one using the same technique. And you can download all scripts for that kind of test case at the end of the article.

In the first step we only implement one single Foreignkey - Primarykey relationship of a LINK-Table. The following chapter then shows you how to automatically spread that testcase onto all LINK-tables and all their relationships.

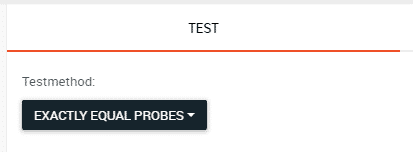

Let's start with a new test case that uses the testmethod "Exactly Equal Probes".

First Dynamic Probe

Add a new dynamic probe to the test case. The probe queries the LINK-table and uses a LEFT OUTER JOIN to link to one of the corresponding HUB-Table. In the WHERE-clause, we filter out records, that habe no records on the right side (Hub-Table) of the LEFT OUTER JOIN.

SELECT DISTINCT

L.hk_h_customer AS hk_h

FROM

dv.l_customerorder AS L

LEFT OUTER JOIN dv.h_customer AS H ON

H.hk_h_customer = L.hk_h_customer

WHERE

H.hk_h_customer IS NULL

Second Dynamic Probe

The first probe must return zero (0) records to make the test result successful. That's why we need a second probe that simply returns an empty dataset where we can compare the result of the first probe against.

SELECT TOP 0 CAST(NULL as binary(20)) AS hk_h

Running the Test Case and review the Result

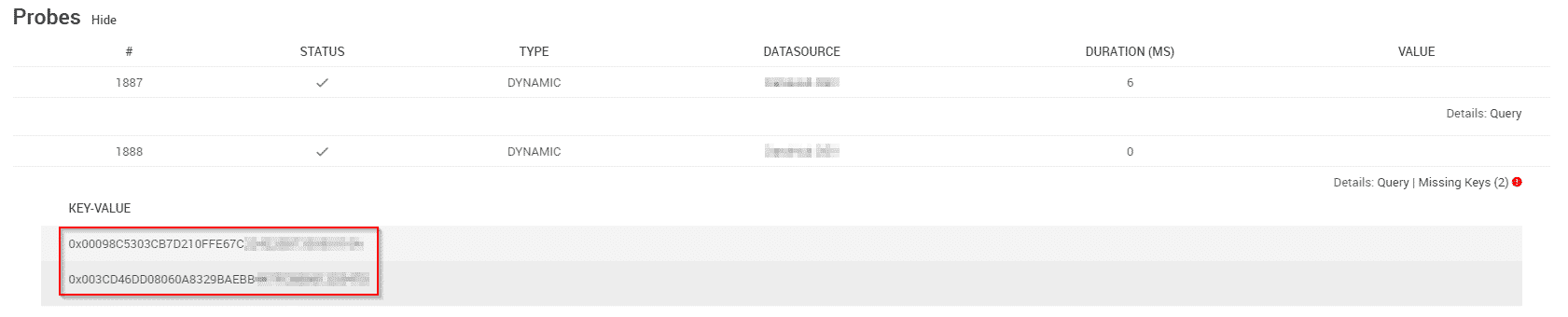

Run the testcase and check whether it succeeds. When it fails, it shows you a list of Hashkeys, that couldn't be found on the primarykey-side of the relationship. So you can further analyze the problem.

Automating the Test Coverage with Data Vault

Now we can take charge of automating the test case in a way that it automatically gets run for all relationships in all LINK-Tables of the data vault. Here we start using the scripting features of BiG EVAL.

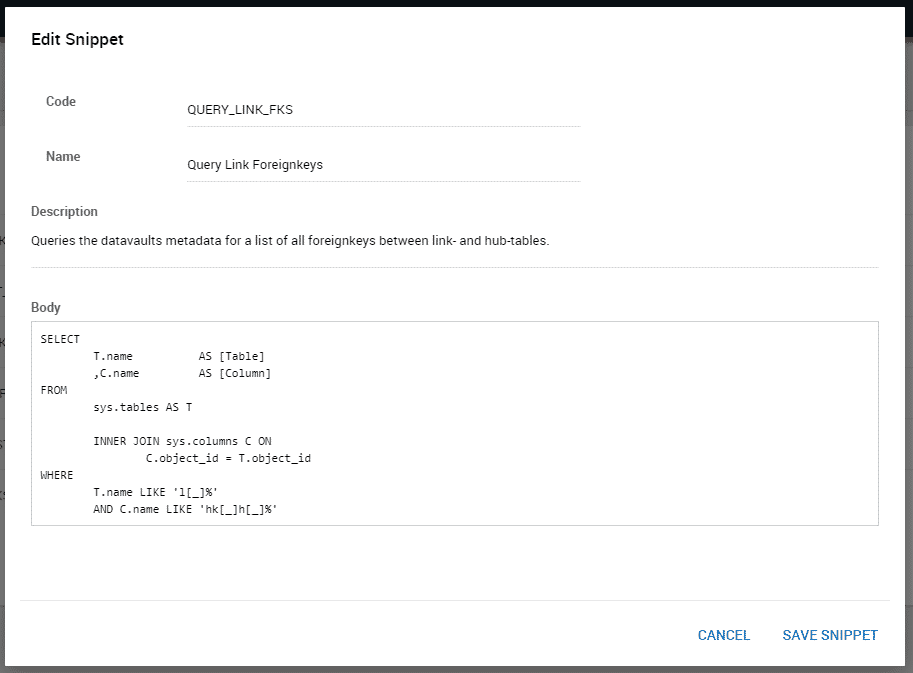

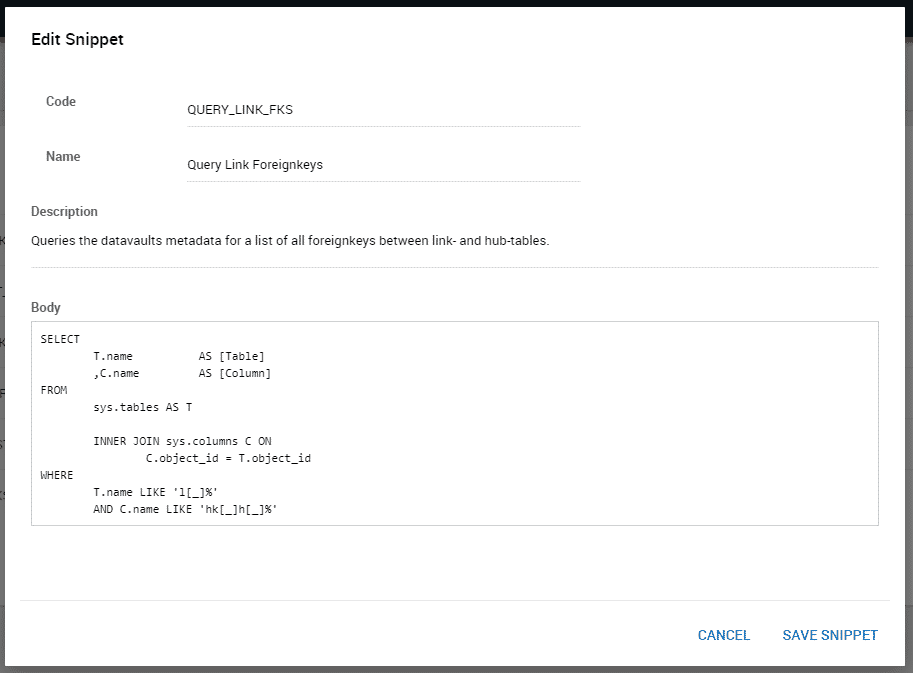

Snippet for querying meta data

First we need to query the meta data of the data vault. We need a list of all foreignkey-hashkeys in all LINK-tables. Create the following snippet that contains a query that does exactly that. It will be used in the control-script of the test case later on.

Source Code

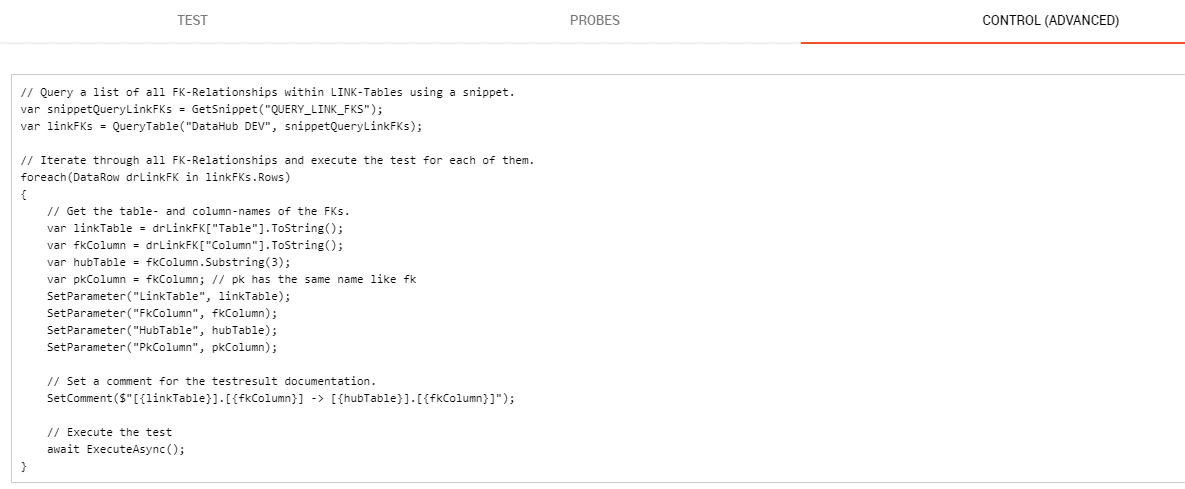

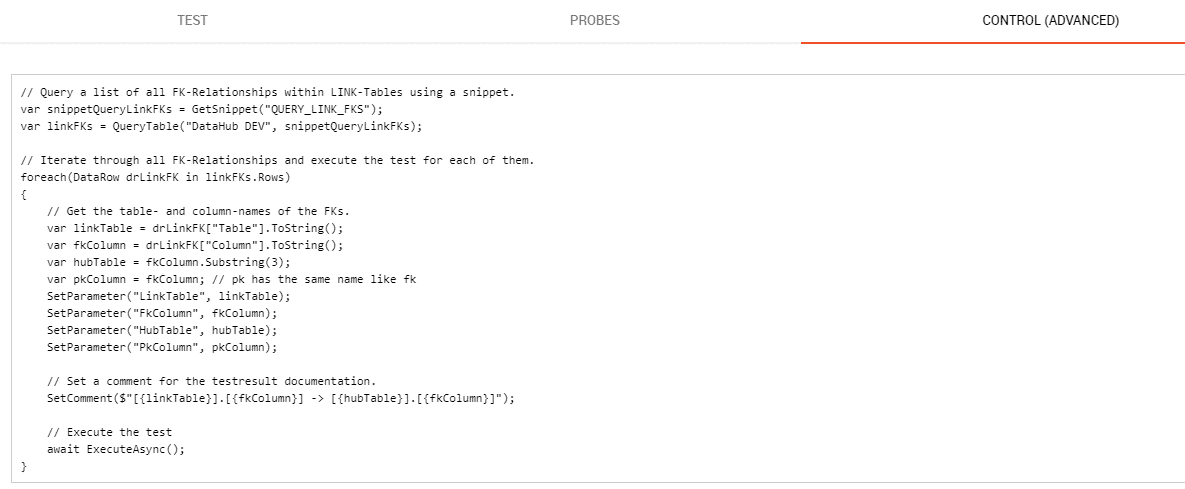

Test Case Control Script

Using the control-script (Tab CONTROL in the test case editor) a test case can be run multiple times. First, the control script uses the metadata query of the snippet built before to query the list of foreign-keys in all LINK-tables. The script then iterates over all these relationsips. In each iteration, it runs the the SQL-Queries of the probes that get parametrized in the next step to validate the relationship.

Source Code

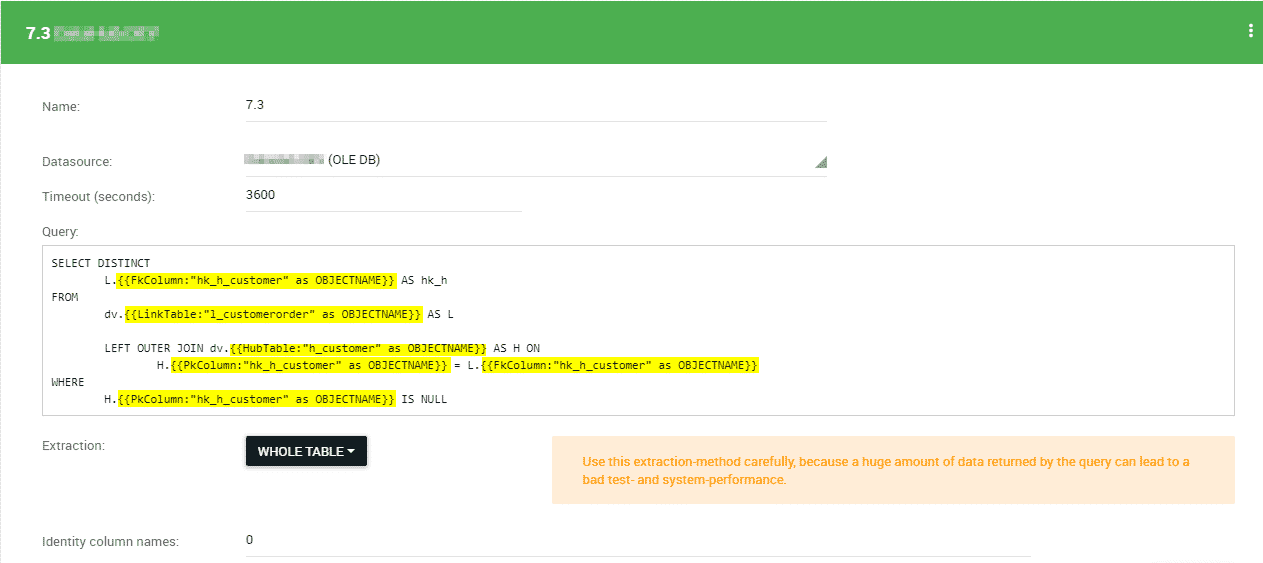

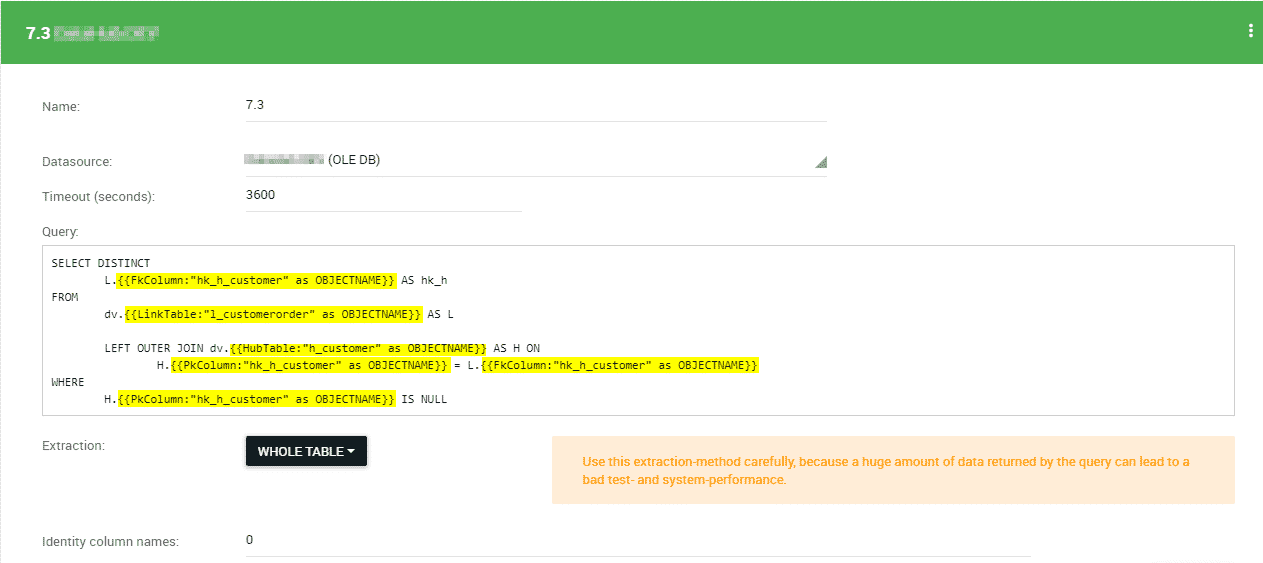

Make the probes dynamic

The parameters that get set in the control-script of the test case can now be used in the SQL-query of the first dynamic probe. This makes the SQL-query dynamic in order to run them against different database objects like LINK- and HUB-tables. The yellow marked parts in the following picture show the dynamic parts of the SQL-query.

Source Code

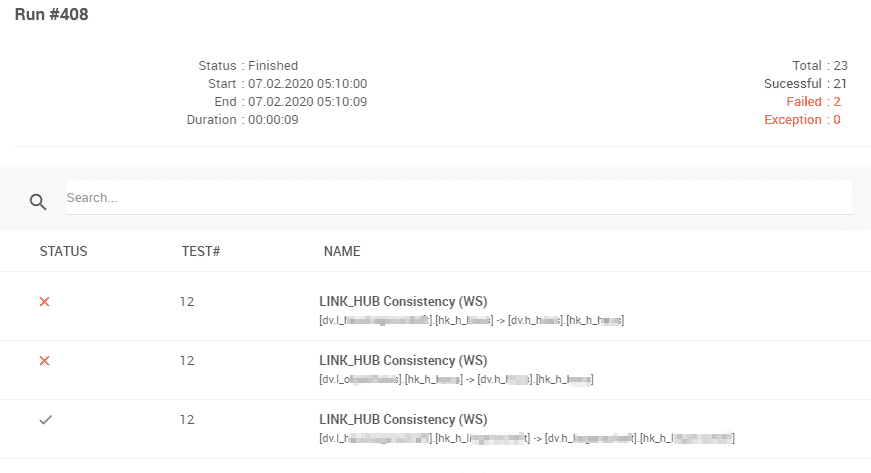

Running the test case and review the results

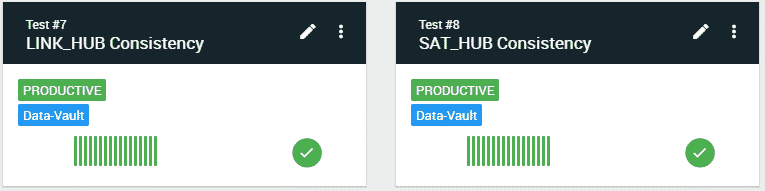

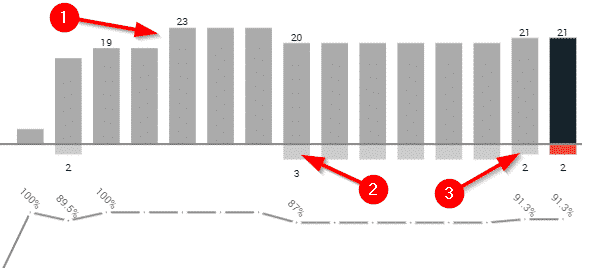

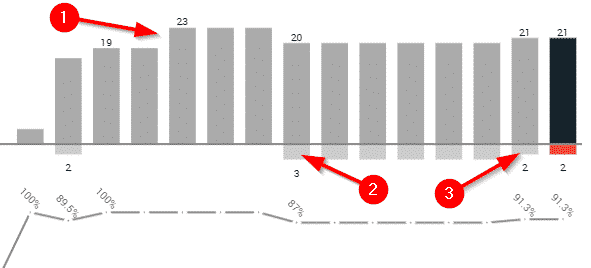

When you run the test case after all these modifications, you can see in the main dashboard, that it validates all relationships of all LINK-tables. The same test case gets run as many times as there are relationships of this kind.

At point (1) one added new entities to the data vault, what automatically lead to new four new test case runs against the new relationships. The test coverage is automatically ensured by that.

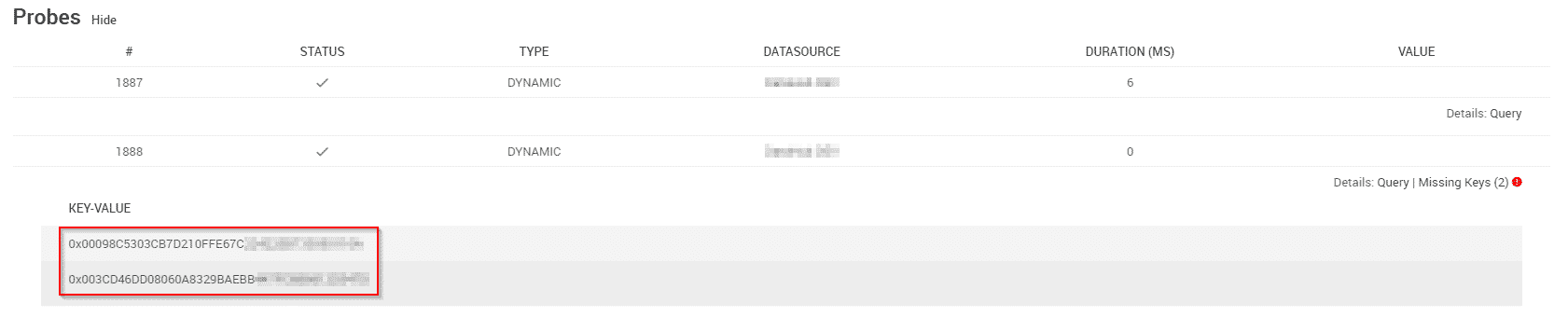

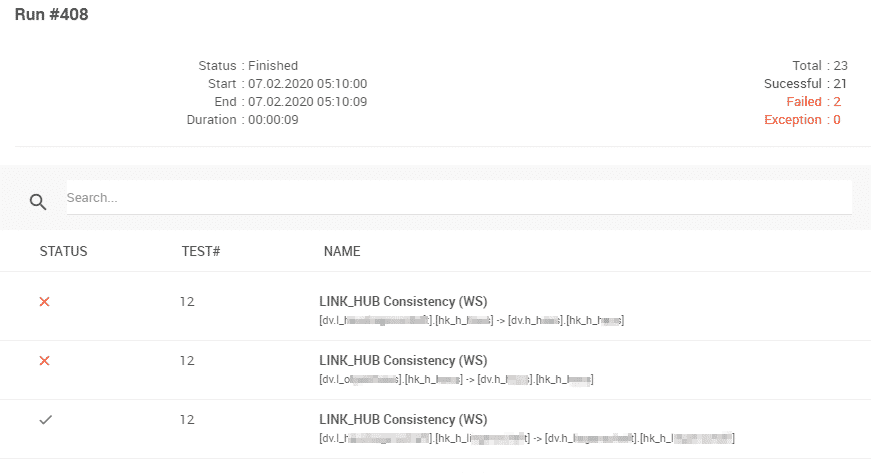

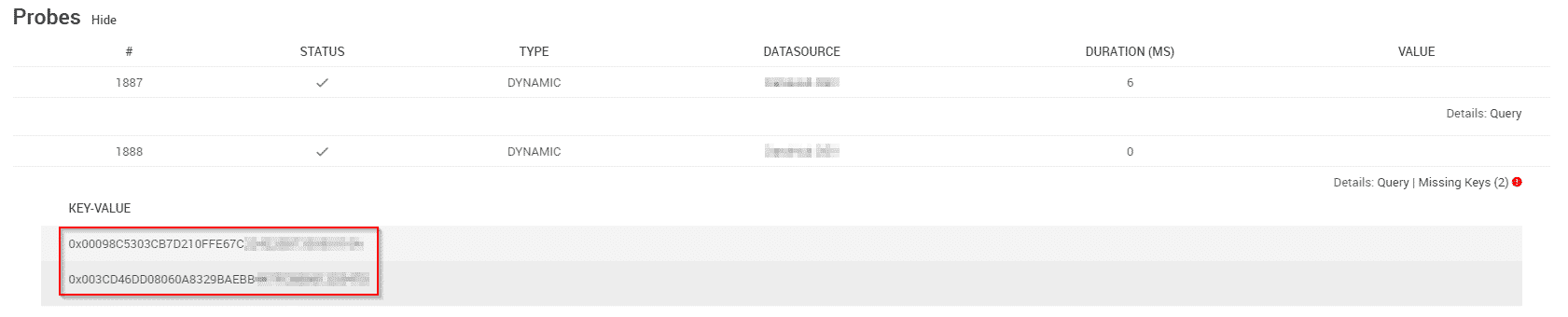

At point (2) there were data inconsistencies in the data integration process. Three relationships had one or more references that couldn't be resolved. One of them was resolved at point (3) . The other one are display as following in the test results:

When drilling down into the test results, you can see the affected hashkeys.

Annex - Scripts for the test case "Satellites to Hubs"

Use the following scripts to implement the other test case for validating satellite to hub relationships.