The Data Warehouse Test Concept

Testing a data warehouse system begins with a robust but right-sized testing concept. This article shows what it should cover, how you can build it, and which tools are available.

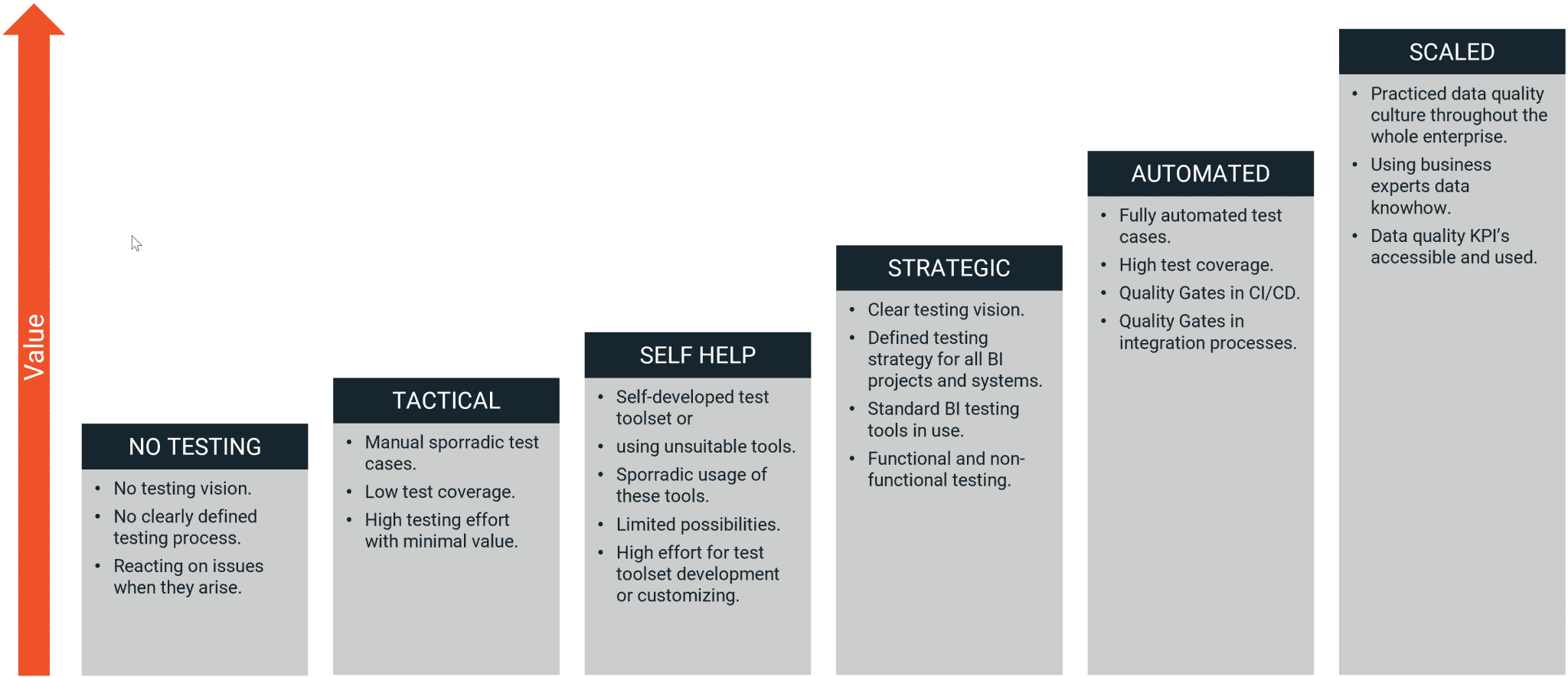

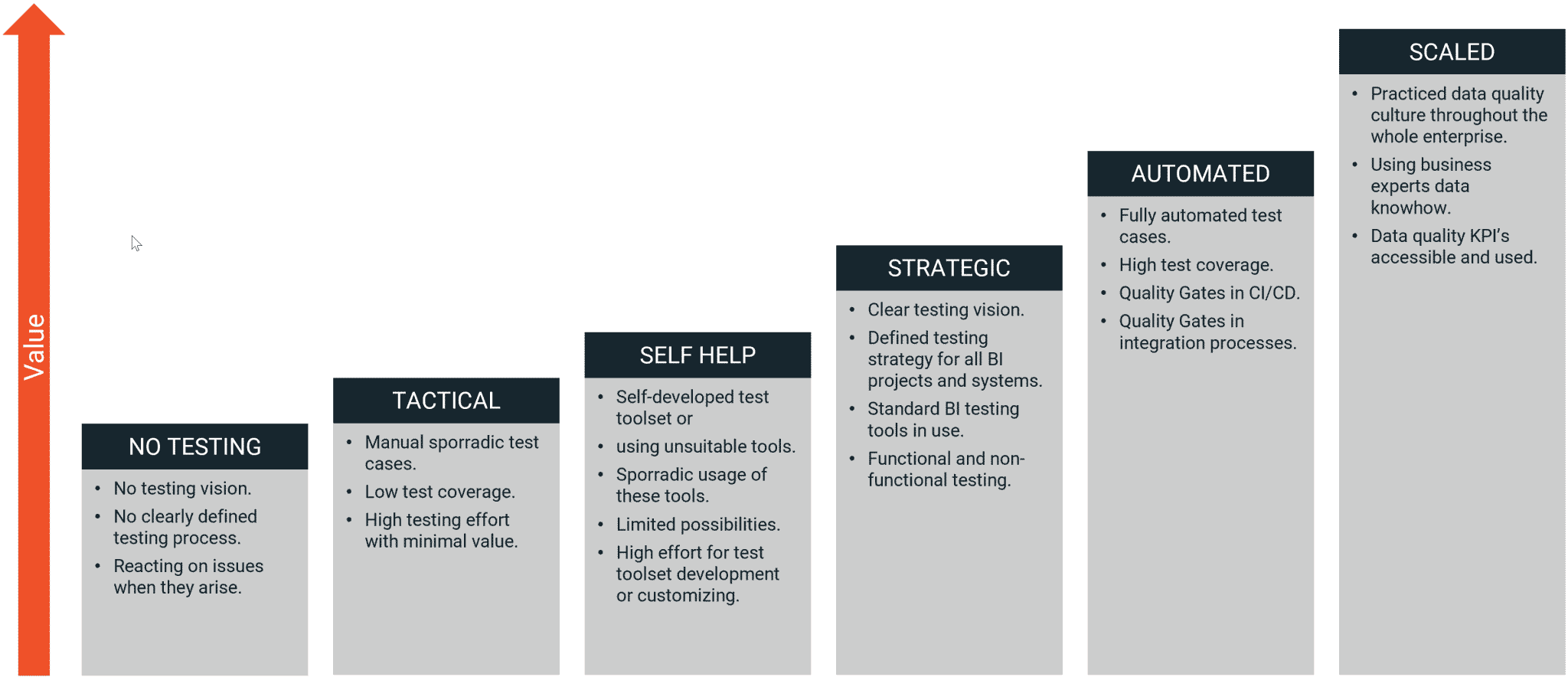

How mature is your testing approach?

Ideally, your data warehouse testing follows a clear concept and automates its testing tasks. This gives you confidence in your quality assurance and lets you prove that everything works as intended.

But many projects take a tactical approach: they test sporadically and react ad hoc when problems come up. Some teams do not test at all, which usually leads to a failed project or, at the very least, to repeated firefighting that costs a lot of money, time, and nerves. Meanwhile, end users lose trust.

Maturity Model for Data Warehouse Testing

FREE eBook

You want a Simple and Reliable Data Test Concept?

Download our latest ebook, "Creating a Winning Data Test Concept" and learn how to create a simple yet effective testing strategy.

Environment-oriented quality assurance

Across separate infrastructure environments, there are a few touchpoints where testing makes sense and is most efficient.

Besides continuous regression testing, the CI/CD processes that handle integration and deployment between environments are the right place to apply automated testing. This ensures that only tested components are propagated to the next environment and, ultimately, to the live environment.
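As a rough illustration of such a gate, the following Python sketch promotes a component only when every automated check passes. It is a minimal stand-in under stated assumptions, not BiG EVAL's API or any particular CI/CD product: the names `run_gate` and `promote`, the component name, and the environment names are all hypothetical.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical check type: a callable that returns True when the check passes.
Check = Callable[[], bool]


def run_gate(checks: Dict[str, Check]) -> Tuple[bool, List[str]]:
    """Run every check and return (all_passed, names_of_failed_checks)."""
    failed: List[str] = []
    for name, check in checks.items():
        try:
            ok = check()
        except Exception:
            # A check that crashes is treated as a failed check.
            ok = False
        if not ok:
            failed.append(name)
    return (not failed, failed)


def promote(component: str, target_env: str, checks: Dict[str, Check]) -> str:
    """Return a status line; a real pipeline would trigger the deployment here."""
    passed, failed = run_gate(checks)
    if not passed:
        return f"BLOCKED {component} -> {target_env}: {', '.join(failed)}"
    return f"PROMOTED {component} -> {target_env}"


if __name__ == "__main__":
    # Illustrative checks only; real checks would query the environment.
    checks = {
        "row_counts_match": lambda: True,
        "no_null_keys": lambda: False,
    }
    print(promote("sales_mart", "prod", checks))
```

In a real pipeline, the blocked case would typically end the job with a non-zero exit code so that the deployment step never runs.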

What kind of tests are possible?

There is no universal recipe for testing a data warehouse system. Individual requirements and budgets lead to different test concepts.

But BiG EVAL provides best practices and templates for a variety of test disciplines that you can apply directly in your project.

When should you start testing?

The testing effort adds up with each sprint. If you intend to run the tests manually, you will not have enough time to execute all test cases within the planned sprint duration. On top of that, if you only start testing at the end, you will not achieve sufficient test coverage, or you will forget important test cases.

Therefore, the first implementation iteration of your project is the right time to start building automated regression tests. And doing this with a modern data test automation tool like BiG EVAL is a no-brainer.
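To make the idea of an automated regression test concrete, here is a minimal sketch of a reconciliation check that compares row count and column total between a staging table and a warehouse table. It uses Python's built-in `sqlite3` module purely as a stand-in database; the table names `stg_orders` and `dwh_orders`, the `amount` column, and the helpers `reconcile` and `build_demo` are assumptions made for illustration, and a dedicated tool would handle this differently.

```python
import sqlite3


def reconcile(conn, source_table, target_table, amount_column):
    """Compare row count and column total between two tables."""

    def profile(table):
        # Identifiers are interpolated directly, so they must come from the
        # test author and never from untrusted input.
        return conn.execute(
            f"SELECT COUNT(*), COALESCE(SUM({amount_column}), 0) FROM {table}"
        ).fetchone()

    src = profile(source_table)
    tgt = profile(target_table)
    return {"row_count_match": src[0] == tgt[0], "sum_match": src[1] == tgt[1]}


def build_demo():
    """Create an in-memory database with hypothetical demo tables."""
    conn = sqlite3.connect(":memory:")
    conn.executescript(
        """
        CREATE TABLE stg_orders (id INTEGER, amount INTEGER);
        CREATE TABLE dwh_orders (id INTEGER, amount INTEGER);
        INSERT INTO stg_orders VALUES (1, 100), (2, 250), (3, 75);
        INSERT INTO dwh_orders VALUES (1, 100), (2, 250), (3, 75);
        """
    )
    return conn


if __name__ == "__main__":
    conn = build_demo()
    print(reconcile(conn, "stg_orders", "dwh_orders", "amount"))
```

Once a check like this exists in the first iteration, it can be rerun unchanged after every later sprint, which is what keeps the growing test effort manageable.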